The embarrassing, now-deleted tweet is apparently an irresistible genre: if human folly doesn’t supply one (it often does), plenty of people are willing to help.

Back in the spring, we showed you how easy a fake tweet is to create, using nothing more complicated than the browser you already have. If you doubt this, try it yourself. (Chrome is best.)

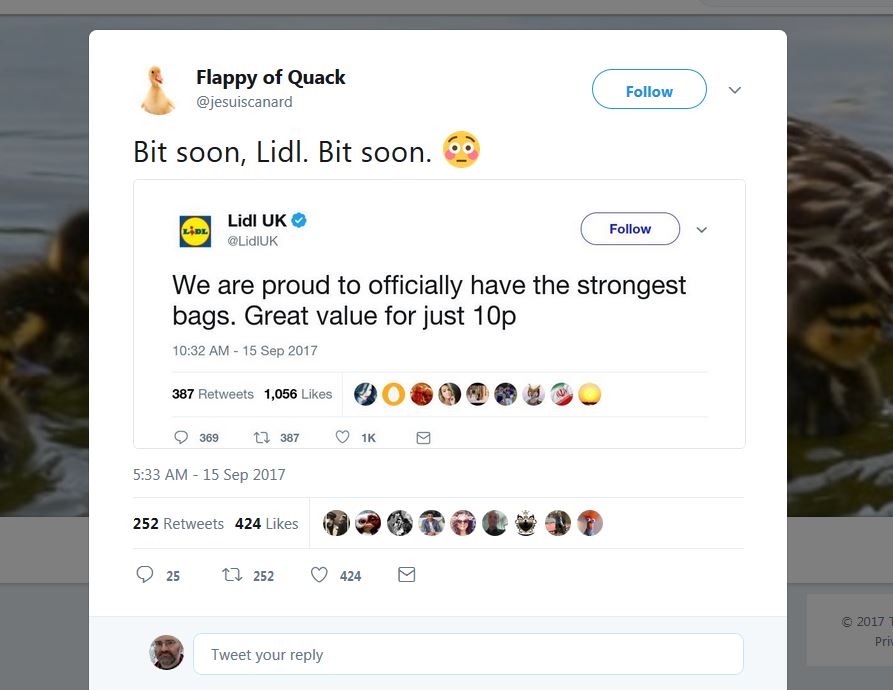

The smoke had hardly cleared from the bomb attack this morning at London’s Parsons Green subway station before someone was demonstrating this all over again (see above).

In brief:

The bomber used a shopping bag from Lidl, a discount grocery chain; the bomb didn’t go off properly, so the bag was left singed but intact; a troll wanted to seed the idea that an overzealous social media person at the company had used this to brag about how tough its bags were (and then had thought better of it). The result looked like this (or see above).

The production standards are higher than for some fake tweet screenshots out there. The creator didn’t go over 128 characters (your browser will let you cut and paste War and Peace into a tweet if you feel like it), and the embedded image looks almost exactly like a retweet, except that the original tweet’s likes and retweets don’t show in it. Still, it’s pretty convincing on the whole.

The original fake was quickly imitated. As with the first, the troll posed as an offended bystander:

In fake news news:

- CNN has a long read about our old friends in Veles, Macedonia, where fake news is a cottage industry. The town now has a fake news instructor, who explains that “There is a large community of young people there … and there is nothing else to do.” Macedonia’s national government finds the whole thing embarrassing, but the town’s mayor calls fake news “your way of producing really quick money.”

- In BuzzFeed, Craig Silverman suggests the real point of Facebook’s ‘disputed by third-party fact-checkers’ label on dubious stories. It’s not really for humans, at least not directly: Facebook’s algorithm responds to the label by showing the story to fewer people.

- Ad buyers on Facebook don’t have to deal with a human (see below), which means, among other things, that shadowy political advertising may still exist on the social network, despite revelations earlier this month that a troll farm in St. Petersburg, Russia, spent US$100,000 on political ads related to last year’s U.S. election.

- On Thursday, ProPublica reported that Facebook had several ad categories catering to anti-Semites. The screenshots in the story are quite remarkable. (Last year, ProPublica was able to design a Facebook ad for real estate that would only be seen by white people, a violation of U.S. federal civil rights laws.)

- Slate responded to ProPublica’s story by seeing how far they could push the concept. Pretty far, it turns out — Facebook will happily approve targeted ads aimed at neo-Nazis and KKK sympathizers. “Facebook’s tool initially said our audience was too small, so we added users whom its algorithm had identified as being interested in Germany’s far-right party … That gave us a potential audience of 135,000, large enough to submit, which we did, using a $20 budget. Facebook approved our ad one minute later.” The problem, of course, is not that a human is making bad decisions, but that no human is making any decisions.

- Not to be left behind, BuzzFeed ran a similar experiment on Google, trying to set up ad buys that targeted people searching for specific terms involving racism and advocacy of genocide. The results were similar: “BuzzFeed News’ campaign was largely made up of keywords suggested by Google’s ad buying platform, which seemed to go the extra mile to make sure all angles of certain racist or bigoted ad buys got covered.”

- “Shouldn’t the roughly 80 per cent of Americans who use Facebook know as much as possible about how they’re being remotely manipulated?” The Intercept asks. “(CEO Mark) Zuckerberg should publicly testify under oath before Congress on his company’s capabilities to influence the political process, be it Russian meddling or anything else. If the company is as powerful as it promises advertisers, it should be held accountable.”

- Russian entities have at least tried to organize anti-immigrant rallies in the United States using Facebook, the Daily Beast reports. (It doesn’t seem to have worked all that well.)

- White-hat hackers in Germany claim that election software there “is susceptible to various external attacks, including those that could secretly modify vote totals before they are reported to electoral officials,” SC Magazine reports. Germans vote in national elections on September 24.

- In an epic case of victim-blaming, a Russian news show told its viewers recently that Poland started the Second World War in 1939. (Stopfake.org, a Ukrainian site, puts the lie in the context of ongoing Russian sensitivity about the 1939 Molotov-Ribbentrop Pact, in which the Nazis and Soviets agreed to invade and divide Poland.)

- Shark fins weren’t seen in the flooded streets of Miami during Hurricane Irma, but if you’d like to pretend otherwise, you will enjoy this video.
