Canadian airports are using facial recognition at border crossings. Heathrow Airport in London is planning on using it to replace boarding passes. In China, bathrooms are using it to prevent the theft of toilet paper.

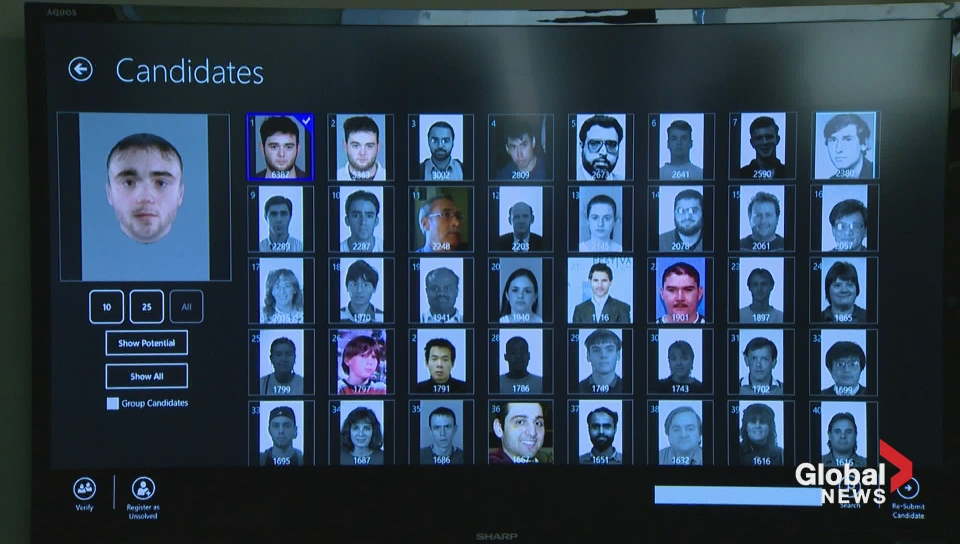

In the U.S., the FBI is using it to solve crimes – with a database that accounts for around half of adult Americans.

That’s over 117 million people, but not all of them are criminals … and some are worried about the implications of that, the Guardian reported.

“Facial recognition technology is a powerful tool law enforcement can use to protect people, their property, our borders, and our nation,” Jason Chaffetz, chair of the oversight committee on the subject, said at a hearing earlier this month.

“But it can also be used by bad actors to harass or stalk individuals. It can be used in a way that chills free speech and free association by targeting people attending certain political meetings, protests, churches, or other types of places in the public.”

The hearing outlined how many Americans aren’t aware that their pictures may be in the database, and how inaccurate results or false positives could lead to racial bias.

The FBI built its database using driver’s licence photos from 18 different states, the Guardian reports.

That’s something that’s not allowed in Canada, as the police found out after the 2011 Stanley Cup riots in Vancouver.

The Insurance Corporation of British Columbia offered its driver’s licence database to police, but the BC Privacy Commissioner ruled that the insurance company shouldn’t have granted access to police without a warrant.

Under Canada’s Privacy Act, “federal government institutions can use personal information for the purpose for which the information was collected or for a use consistent with that purpose,” the Office of the Privacy Commissioner wrote in a report.

“Apart from some limited and specific exceptions, the consent of the individual must be obtained for any other use of the information.”

But that’s not what’s happening in the U.S. In 2016, the Government Accountability Office analyzed the FBI’s Next Generation Identification Program and found it was lacking in oversight, which led to the recent hearing.

Racial bias

Along with privacy concerns, the hearing examined the accuracy of the FBI’s software – which is wrong around 15 per cent of the time.

While that might be worrying on its own, the Guardian notes the algorithms are less accurate when dealing with African Americans.

“Innocent people could bear the burden of being falsely accused, including the implication of having federal investigators turn up at their home or business,” Diana Maurer of the Government Accountability Office said at the hearing.
